Analyzing Evals

Quick Summary

Confident AI keeps track of your evaluation histories in both development and deployment and allows you to:

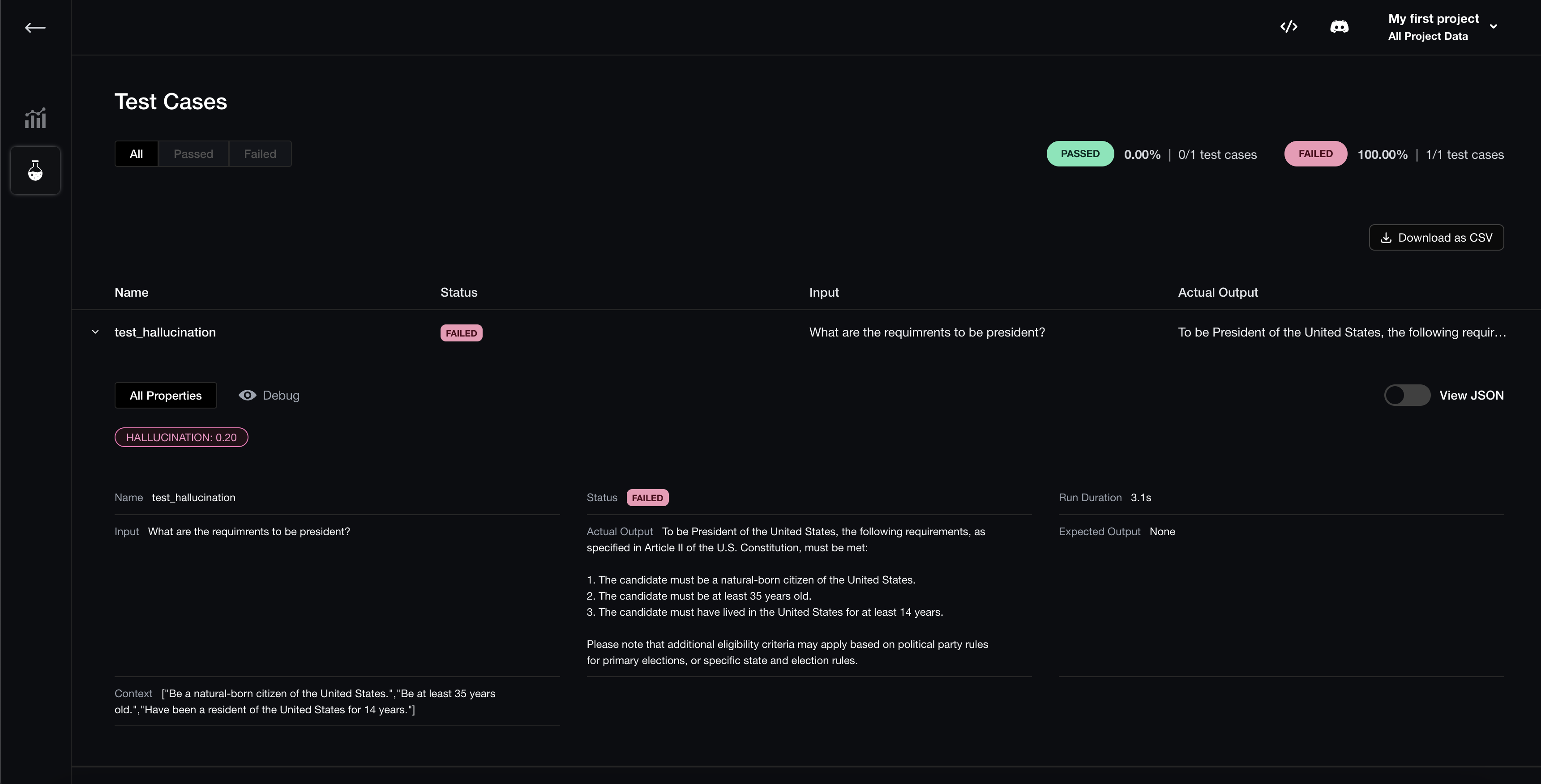

- visualize evaluation results

- compare and select optimal hyperparameters (eg. prompt templates, model used, etc.) for each test run

Visualize Evaluation Results

Once you have logged in via deepeval login, all evaluations executed using deepeval test run, evaluate(...), or dataset.evaluate(...) will automatically have their results available on Confident AI.

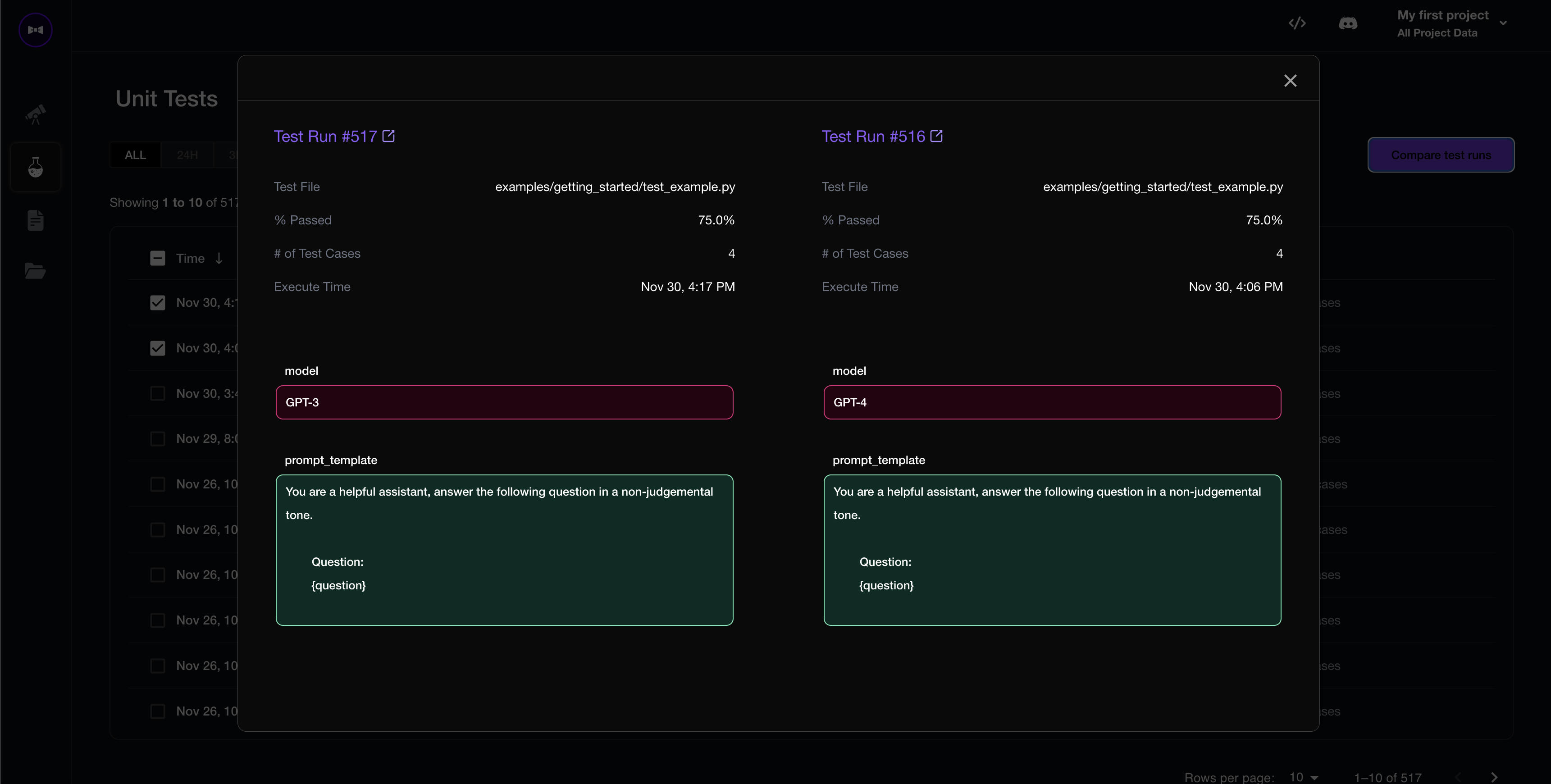

Compare Hyperparameters

Begin by associating hyperparameters with each test run:

import deepeval
from deepeval import assert_test
from deepeval.metrics import HallucinationMetric
from deepeval.test_case import LLMTestCase


def test_hallucination():
    metric = HallucinationMetric()
    test_case = LLMTestCase(...)
    assert_test(test_case, [metric])


# Just an example of prompt_template
prompt_template = """You are a helpful assistant, answer the following question in a non-judgemental tone.

Question:
{question}
"""


# Although the values in this example are hardcoded,
# you should ideally pass in variables to keep things dynamic
@deepeval.log_hyperparameters(model="gpt-4", prompt_template=prompt_template)
def hyperparameters():
    # Any additional hyperparameters you wish to keep track of
    return {
        "chunk_size": 500,
        "temperature": 0,
    }
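The comment in the example recommends passing in variables rather than hardcoding values. One way to do that, shown here as a minimal sketch, is to read the values from environment variables using only the standard library. The helper name `load_run_config` and the `EVAL_*` variable names are hypothetical and are not part of deepeval.

```python
import os
from typing import Any, Dict, Mapping

# Hypothetical default, mirroring the prompt template used in the example.
DEFAULT_PROMPT_TEMPLATE = (
    "You are a helpful assistant, answer the following question "
    "in a non-judgemental tone.\n\nQuestion:\n{question}\n"
)


def load_run_config(env: Mapping[str, str] = os.environ) -> Dict[str, Any]:
    """Read hyperparameters from a mapping of environment variables.

    The EVAL_* names are assumptions made for this sketch. Missing
    variables fall back to the values hardcoded in the example.
    """
    return {
        "model": env.get("EVAL_MODEL", "gpt-4"),
        "prompt_template": env.get("EVAL_PROMPT_TEMPLATE", DEFAULT_PROMPT_TEMPLATE),
        "chunk_size": int(env.get("EVAL_CHUNK_SIZE", "500")),
        "temperature": float(env.get("EVAL_TEMPERATURE", "0")),
    }


config = load_run_config()

# In the test file, these values would replace the hardcoded ones:
#
# @deepeval.log_hyperparameters(
#     model=config["model"], prompt_template=config["prompt_template"]
# )
# def hyperparameters():
#     return {
#         "chunk_size": config["chunk_size"],
#         "temperature": config["temperature"],
#     }
```

With a setup like this, a different model or chunk size can be tried by changing an environment variable before each test run, without editing the test file.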

You MUST provide the model and prompt_template arguments to log_hyperparameters so that deepeval knows which LLM and prompt template you're evaluating.

Hyperparameter logging only works if you're running evaluations using deepeval test run. If you're not already using deepeval test run for evaluations, we highly recommend that you start.

That's all! Every test run will now log its hyperparameters, so you can compare runs and optimize accordingly.
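To make the idea of comparing runs concrete, the sketch below ranks a few test runs by pass rate and picks the hyperparameters of the best one. It is only an offline illustration: the record shape, the helper names `pass_rate` and `rank_runs`, and every value in `runs` are made-up placeholders, not Confident AI's data model or real evaluation results.

```python
from typing import Any, Dict, List

RunRecord = Dict[str, Any]


def pass_rate(run: RunRecord) -> float:
    """Fraction of test cases that passed in a run (0.0 if the run is empty)."""
    results = run["results"]
    return sum(results) / len(results) if results else 0.0


def rank_runs(runs: List[RunRecord]) -> List[RunRecord]:
    """Return the runs sorted from highest to lowest pass rate."""
    return sorted(runs, key=pass_rate, reverse=True)


# Placeholder records: logged hyperparameters plus per-test-case pass/fail flags.
runs = [
    {
        "hyperparameters": {"model": "gpt-4", "chunk_size": 500, "temperature": 0},
        "results": [True, True, False, True],
    },
    {
        "hyperparameters": {"model": "gpt-4", "chunk_size": 250, "temperature": 0},
        "results": [True, False, False, True],
    },
]

best = rank_runs(runs)[0]
print(best["hyperparameters"], pass_rate(best))
```

Pass rate is only one possible ranking criterion. Average metric score or cost per run could be swapped in as the sort key.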